32 Cards in this Set

- Front

- Back

- Front: Relative risk (RR) =
- Back: $RR = I_{old} / I_{new}$
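Here is a minimal Python sketch of the RR card. It assumes a hypothetical trial in which 30 of 200 patients on the old (control) treatment and 15 of 200 on the new treatment have the outcome. It follows the card's convention of putting $I_{old}$ in the numerator. Many texts put the new or exposed group on top instead, which gives the reciprocal. The helper names are illustrative only.

```python
def incidence(events: int, n: int) -> float:
    """Cumulative incidence (risk): people with the outcome / people at risk."""
    return events / n


def relative_risk(i_old: float, i_new: float) -> float:
    """RR in this card set's orientation: I_old / I_new."""
    return i_old / i_new


# Hypothetical trial numbers (not from the original cards).
i_old = incidence(30, 200)  # old / control treatment
i_new = incidence(15, 200)  # new treatment
rr = relative_risk(i_old, i_new)
print(f"RR = {rr:.2f}")
```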

- Front: Odds ratio (OR) =
- Back: $OR = Odds_{old} / Odds_{new}$
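The sketch below computes the odds ratio for the same hypothetical trial (30/200 old vs 15/200 new), keeping the card's old-over-new orientation. The function names are illustrative.

```python
def odds(events: int, n: int) -> float:
    """Odds of the outcome: events / non-events."""
    return events / (n - events)


def odds_ratio(odds_old: float, odds_new: float) -> float:
    """OR in this card set's orientation: Odds_old / Odds_new."""
    return odds_old / odds_new


# Hypothetical trial numbers (not from the original cards).
odds_old = odds(30, 200)  # 30 / 170
odds_new = odds(15, 200)  # 15 / 185
or_ = odds_ratio(odds_old, odds_new)
print(f"OR = {or_:.3f}")
```

With these numbers the OR (about 2.18) is somewhat further from 1 than the RR of 2.0. The two converge as the outcome becomes rarer.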

- Front: Absolute risk reduction (ARR) =
- Back: $ARR = I_{old} - I_{new}$
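Here is the ARR for the same hypothetical trial, as a short Python sketch (the function name is illustrative):

```python
def absolute_risk_reduction(i_old: float, i_new: float) -> float:
    """ARR: risk under the old treatment minus risk under the new one."""
    return i_old - i_new


# Hypothetical trial numbers (not from the original cards).
i_old = 30 / 200
i_new = 15 / 200
arr = absolute_risk_reduction(i_old, i_new)
print(f"ARR = {arr:.3f}")
```

An ARR of 0.075 means 7.5 fewer events per 100 patients treated with the new treatment.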

|

|

|

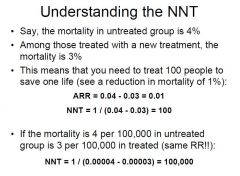

NNT =

|

1/(Iold - Inew)

OR 1/ARR |
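This sketch computes NNT for the same hypothetical trial. It assumes the common convention of rounding NNT up to the next whole patient. It only handles the case where the new treatment lowers risk (ARR > 0).

```python
import math


def number_needed_to_treat(i_old: float, i_new: float) -> float:
    """NNT = 1 / ARR; only meaningful here when the new treatment reduces risk."""
    arr = i_old - i_new
    if arr <= 0:
        # A negative ARR means harm (number needed to harm), not handled here.
        raise ValueError("ARR must be positive to compute NNT")
    return 1 / arr


# Hypothetical trial numbers (not from the original cards).
nnt = number_needed_to_treat(30 / 200, 15 / 200)
print(f"NNT = {nnt:.2f}, rounded up to {math.ceil(nnt)} patients")
```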

- Front: What is efficacy? How is it calculated?
- Back: The proportion of the control group's risk that is removed by the new treatment: $\text{efficacy} = ARR / I_{old}$
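Here is efficacy for the same hypothetical trial, sketched in Python (the function name is illustrative). Note that $ARR / I_{old}$ is the same as $1 - I_{new} / I_{old}$.

```python
def efficacy(i_old: float, i_new: float) -> float:
    """Fraction of the control-group risk removed by the new treatment."""
    arr = i_old - i_new
    return arr / i_old


# Hypothetical trial numbers (not from the original cards).
eff = efficacy(30 / 200, 15 / 200)
print(f"Efficacy = {eff:.0%}")
```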

|

|

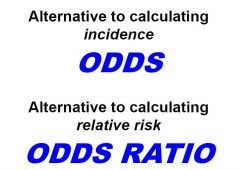

The odds ratio is an alternative to ___

|

RR

|

|

|

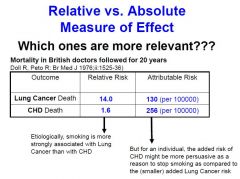

You can use relative or absolute measures of effect. Explain and when is each one useful.

|

Relative are very informative for causation strength.

Absolute is very informative with regards to the total risk reduction associated with a treatment |

- Front: Do relative or absolute measures measure the magnitude?
- Back: Absolute

- Front: What allows you to compare both relative and absolute measures?
- Back: Efficacy

|

|

|

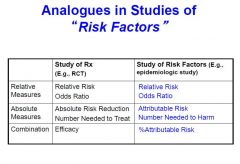

Relative or absolute?

1. most relevant for etiology. 2. attributable risk. 3. most relevant to evaluate actual treatment/risk to exposed individuals. |

1. relative.

2. absolute 3. absolute |

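- Front: Why can't you analyze patients only by the treatment they actually received when there is crossover?
- Back: It violates the principle of randomization: the groups compared are no longer the randomized groups, so they may differ in ways other than treatment.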

- Front: Besides upholding randomization, why is it important to use intention-to-treat analysis?
- Back: Because crossover may be largely due to adverse effects of the treatment.

- Front: List the three main limitations of randomized controlled clinical trials.
- Back: 1. Not always feasible or ethical. 2. Limited generalizability. 3. Statistical uncertainty.

- Front: What is an alternative to incidence? To relative risk?
- Back: Odds (for incidence); the odds ratio (for relative risk).

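- Front: Explain NNT.
- Back: The number of patients who must receive the new treatment instead of the old one to prevent one additional outcome; $NNT = 1 / ARR$.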

- Front: Compare relative, absolute, and combination factors.
- Back:

- Front: Think about when each is relevant.
- Back: Good work.

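- Front: What is a parameter?
- Back: The true (population) value of a quantity that a study tries to estimate, such as the true difference between two treatments. When you want to know whether one treatment is better, you want to know by how much it is better.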

- Front: Is the standard error bigger or smaller if you have a big sample size?
- Back: Smaller. Standard error is inversely related to sample size (for a mean, $SE = SD / \sqrt{n}$).
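This small sketch shows why the standard error shrinks as the sample grows. It assumes the standard error of a mean, $SE = SD / \sqrt{n}$, and a hypothetical SD of 10.

```python
import math


def standard_error(sd: float, n: int) -> float:
    """Standard error of a sample mean: SD / sqrt(n)."""
    return sd / math.sqrt(n)


sd = 10.0  # hypothetical standard deviation
for n in (25, 100, 400):
    print(f"n = {n:>3}: SE = {standard_error(sd, n):.2f}")
```

Because of the square root, quadrupling the sample size only halves the SE.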

- Front: Describe a type I error.
- Back: Concluding that there is a difference when in fact there is not. Associated with a false positive and with the p-value (it is controlled by the significance level α).
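To see what the type I error rate means, this sketch simulates many hypothetical two-group studies in which the null hypothesis is true. Both groups are drawn from the same normal population with a known SD of 1. It then counts how often a two-sided z test gives p < 0.05. The false-positive proportion should land close to α = 0.05. All settings (30 per group, 10,000 studies, the seed) are arbitrary assumptions.

```python
import math
import random
from statistics import NormalDist


def two_sided_p(z: float) -> float:
    """Two-sided p-value for a standard normal test statistic."""
    return 2 * (1 - NormalDist().cdf(abs(z)))


def simulate_type_i_rate(n_studies=10_000, n=30, alpha=0.05, seed=1):
    """Fraction of null-true studies that wrongly reject H0 (false positives)."""
    rng = random.Random(seed)
    se = math.sqrt(1 / n + 1 / n)  # SE of a difference in means, known SD = 1
    false_positives = 0
    for _ in range(n_studies):
        # Both groups come from the SAME population, so H0 is true.
        a = [rng.gauss(0, 1) for _ in range(n)]
        b = [rng.gauss(0, 1) for _ in range(n)]
        z = (sum(a) / n - sum(b) / n) / se
        if two_sided_p(z) < alpha:
            false_positives += 1
    return false_positives / n_studies


rate = simulate_type_i_rate()
print(f"Observed type I error rate: {rate:.3f}")
```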

- Front: Describe a type II error.
- Back: Concluding that there is no difference when in reality there IS one. Associated with a false negative and with the probability β.

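- Front: Is type I or type II error more common? Why?
- Back: Type II error is more common, because there is only one null hypothesis but many alternative hypotheses. α is fixed (e.g. 0.05) for the single null value, but when the true effect is close to the null, power is low and β is large.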

- Front: What is the null hypothesis value for ARR? For RR?
- Back: ARR: 0. RR: 1.

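- Front: How do you account for type I error?
- Back: In your p-value, which you compare with the significance level α set in advance (e.g. 0.05). α is the type I error rate you are willing to accept.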

- Front: Give the wording for the p-value.
- Back: A difference this large or larger from the null hypothesis value would occur only __% of the time if the null hypothesis were in fact true.

- Front: In order to find your p-value, what do you do with z?
- Back: Look up the one-tailed probability beyond |z| and double it (for a two-sided test).
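This sketch converts z to p using the standard library's `statistics.NormalDist` (Python 3.8+). It takes the one-tailed area beyond |z| and, for a two-sided test, doubles it.

```python
from statistics import NormalDist


def p_value_from_z(z: float, two_sided: bool = True) -> float:
    """p-value for a z statistic under the standard normal distribution."""
    tail = 1 - NormalDist().cdf(abs(z))  # one-tailed area beyond |z|
    return 2 * tail if two_sided else tail


for z in (1.0, 1.96, 2.58):
    print(f"z = {z}: two-sided p = {p_value_from_z(z):.3f}")
```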

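- Front: Give a definition for a confidence interval.
- Back: A range of values, computed from the sample, constructed so that if the study were repeated many times, 95% of such intervals (for a 95% CI) would contain the true parameter.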
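The repeated-sampling meaning of a 95% CI can be checked with a simulation sketch. It assumes a hypothetical normal population with true mean 50 and SD 10, with the SD treated as known. For each of 2,000 simulated studies of n = 30 it builds the interval mean ± 1.96·SD/√n. About 95% of those intervals should contain the true mean.

```python
import math
import random


def coverage_of_95_ci(true_mean=50.0, sd=10.0, n=30, n_studies=2_000, seed=2):
    """Share of simulated 95% CIs that contain the true population mean."""
    rng = random.Random(seed)
    half_width = 1.96 * sd / math.sqrt(n)  # SD assumed known
    hits = 0
    for _ in range(n_studies):
        sample = [rng.gauss(true_mean, sd) for _ in range(n)]
        mean = sum(sample) / n
        if mean - half_width <= true_mean <= mean + half_width:
            hits += 1
    return hits / n_studies


coverage = coverage_of_95_ci()
print(f"Coverage: {coverage:.3f}")
```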

- Front: What can the CI NOT include if you want to say your null hypothesis is untrue?
- Back: The null hypothesis value (0 for ARR, 1 for RR).
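As an illustration, this sketch computes a 95% CI for the ARR of a hypothetical trial (30/200 events on the old treatment vs 15/200 on the new). It uses the simple Wald (normal-approximation) interval, which is only one of several methods. It then checks whether the interval excludes the null value of 0.

```python
import math


def arr_with_95_ci(events_old, n_old, events_new, n_new):
    """ARR with a Wald 95% CI (normal approximation, unpooled SE)."""
    p_old = events_old / n_old
    p_new = events_new / n_new
    arr = p_old - p_new
    se = math.sqrt(p_old * (1 - p_old) / n_old + p_new * (1 - p_new) / n_new)
    return arr, arr - 1.96 * se, arr + 1.96 * se


# Hypothetical trial numbers (not from the original cards).
arr, lo, hi = arr_with_95_ci(30, 200, 15, 200)
null_value = 0.0  # null hypothesis value for ARR
excludes_null = not (lo <= null_value <= hi)
print(f"ARR = {arr:.3f}, 95% CI ({lo:.3f}, {hi:.3f}), excludes 0: {excludes_null}")
```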

- Front: What three things does statistical power depend on?
- Back: 1. The magnitude of the findings (effect size). 2. The alpha level you set. 3. The sample size.
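This rough sketch shows how each of the three factors moves power. It assumes a two-sided z test comparing two proportions with equal group sizes, using the usual normal approximation and ignoring the negligible opposite tail. The trial numbers are hypothetical.

```python
from math import sqrt
from statistics import NormalDist

STD_NORMAL = NormalDist()


def approx_power(p_old, p_new, n_per_group, alpha=0.05):
    """Approximate power of a two-sided z test comparing two proportions."""
    se = sqrt(p_old * (1 - p_old) / n_per_group + p_new * (1 - p_new) / n_per_group)
    z_crit = STD_NORMAL.inv_cdf(1 - alpha / 2)
    # Ignores the tiny chance of rejecting in the "wrong" direction.
    return STD_NORMAL.cdf(abs(p_old - p_new) / se - z_crit)


base = approx_power(0.15, 0.075, 200)
bigger_effect = approx_power(0.15, 0.05, 200)
looser_alpha = approx_power(0.15, 0.075, 200, alpha=0.10)
bigger_sample = approx_power(0.15, 0.075, 400)

for label, value in [("baseline", base), ("bigger effect", bigger_effect),
                     ("alpha = 0.10", looser_alpha), ("400 per group", bigger_sample)]:
    print(f"{label}: power = {value:.2f}")
```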

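- Front: How do you calculate chi-square?
- Back: $\chi^2 = \sum \frac{(O - E)^2}{E}$, summed over all cells, where $O$ is the observed count and $E$ the expected count.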
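This sketch computes the Pearson chi-square statistic for a contingency table. Expected counts are row total × column total / grand total. The table reuses a hypothetical trial (rows: old / new treatment; columns: outcome yes / no), and no continuity correction is applied.

```python
def chi_square(observed):
    """Pearson chi-square statistic: sum of (O - E)^2 / E over all cells."""
    row_totals = [sum(row) for row in observed]
    col_totals = [sum(col) for col in zip(*observed)]
    grand_total = sum(row_totals)
    stat = 0.0
    for i, row in enumerate(observed):
        for j, o in enumerate(row):
            e = row_totals[i] * col_totals[j] / grand_total  # expected under independence
            stat += (o - e) ** 2 / e
    return stat


# Hypothetical 2x2 table (not from the original cards).
table = [[30, 170], [15, 185]]
print(f"chi-square = {chi_square(table):.3f}")
```

A 2×2 table has 1 degree of freedom, and the critical value at α = 0.05 is 3.84. A statistic of about 5.63 would therefore be called significant.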

|

|

|

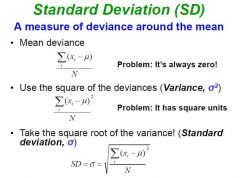

how do you calculate SD?

|

like this!
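The card's answer is an image that is not reproduced here. This sketch therefore assumes the usual sample SD formula, with n − 1 in the denominator, and cross-checks it against `statistics.stdev`. The data values are arbitrary.

```python
import math
import statistics


def sample_sd(values):
    """Sample standard deviation: sqrt(sum((x - mean)^2) / (n - 1))."""
    n = len(values)
    mean = sum(values) / n
    squared_deviations = sum((x - mean) ** 2 for x in values)
    return math.sqrt(squared_deviations / (n - 1))


data = [2, 4, 4, 4, 5, 5, 7, 9]  # arbitrary example values
print(f"manual: {sample_sd(data):.4f}, statistics.stdev: {statistics.stdev(data):.4f}")
```

Dividing by n instead of n − 1 gives the population SD (`statistics.pstdev`), which is exactly 2.0 for this data.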

- Front: How do you relate a p-value and a z-score?
- Back: Find z, look up the one-tailed probability beyond |z|, and double it. This is the (two-sided) p-value.